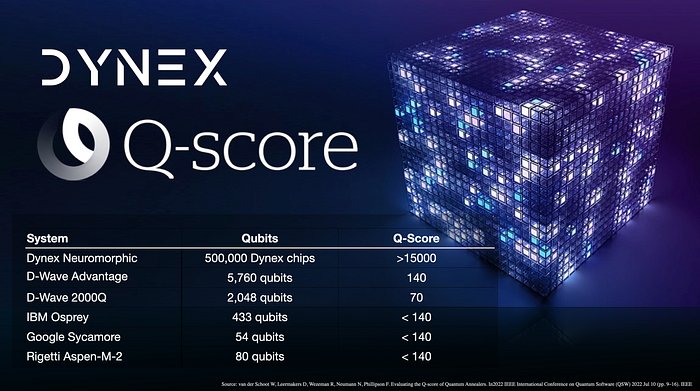

Benchmarking the Dynex Neuromorphic Platform with the Q-Score

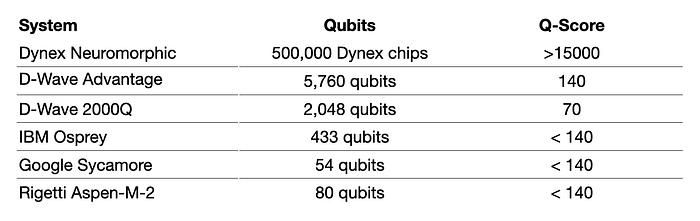

In this study, we use the Q-score to benchmark the computing capabilities of the Dynex Neuromorphic Platform and to compare it with state-of-the-art quantum computers. Our findings indicate that, on this metric, the Dynex platform substantially outperforms the largest quantum computing systems currently available. While physical quantum computing systems such as Google’s Sycamore, IBM’s Osprey, D-Wave’s Advantage, and Rigetti’s Aspen-M-2 have reportedly achieved Q-scores not surpassing 140, the Dynex Neuromorphic Platform has demonstrated a Q-score exceeding 15,000.

The Q-score, introduced by Atos in 2020, is a metric designed to assess how effectively a system executes a representative quantum application. It gauges a system’s ability to address practical, real-world problems rather than merely its theoretical or physical performance. Serge Haroche, Nobel Laureate in Physics 2012, member of the French Academy of Sciences, Emeritus Professor at the Collège de France and member of the Atos Quantum Advisory Board, explains the importance of an independent metric for measuring the efficacy of a quantum device:

With the development of competing NISQ technologies, a quantitative figure of merit in order to compare as objectively as possible the performances of various machines becomes an interesting information for potential users or buyers. This is what Q-score tries to achieve, by assigning a number to each device.

In the world of cutting-edge computing, two revolutionary technologies are making waves: neuromorphic computing and quantum computing. While they appear distinct at first glance, there are intriguing similarities and complementary features that have researchers excited about the possibilities when these two fields converge.

Quantum Computing

Quantum computers hold immense promise for revolutionising computing and solving complex problems that classical computers struggle with. However, quantum computers currently face several key limitations that make them too small and impractical for handling real-world problems at scale:

Quantum Bit (Qubit) Stability: Quantum computers rely on qubits, which are highly sensitive to external factors like temperature and electromagnetic interference. Maintaining the coherence and stability of qubits as a system scales up remains a significant challenge. This makes it difficult to create large-scale quantum computers with sufficient qubits to tackle real-world problems.

Error Correction: Quantum computers are prone to errors due to the fragile nature of qubits. Error correction in quantum computing is complex and requires a significant number of qubits. To date, creating a robust quantum error correction system has been a formidable challenge.

Quantum Volume: Quantum volume is a measure of a quantum computer’s capabilities, considering factors like qubit count and error rates. While there has been progress in increasing quantum volume, it is still far too small to address many practical problems effectively.

Scalability: Building large quantum computers with thousands or millions of qubits, which would be necessary for handling real-world problems, is technically demanding and costly. The current state of quantum technology is not yet capable of creating such massive systems.

Quantum Hardware Infrastructure: The infrastructure required to house and maintain quantum computers, including cooling systems and ultra-low temperatures, makes them impractical for most real-world environments.

Limited Access: Access to quantum computers is limited, and only a few organizations and researchers have the resources and expertise to utilize them effectively. This further restricts their practical application.

While quantum computers have made significant strides in recent years, they are still in the early stages of development. Researchers and engineers are actively working on overcoming these limitations. As quantum technology advances, it holds great promise for solving real-world problems, but it may be some time before they become large and stable enough to handle the full range of challenges that classical computers currently address.

Constant Innovation in Quantum Algorithms

The incredible potential of quantum computers lies not only in their hardware but also in the algorithms that run on them. Quantum algorithms are purpose-built to leverage the unique abilities of quantum computers and provide solutions to complex problems. Researchers from around the world are actively engaged in developing and refining these algorithms. Here’s why new algorithms for quantum computers are continually emerging:

Evolving Quantum Hardware: Quantum computers themselves are rapidly evolving. As more powerful quantum processors become available, algorithms must adapt to exploit their increased capabilities.

Algorithm Optimization: Researchers are constantly working to optimize existing algorithms, making them more efficient and practical for real-world applications. This iterative process leads to the creation of improved algorithms.

New Application Domains: As quantum computing matures, it extends into new application domains. Algorithms are custom-tailored to address specific challenges in fields like cryptography, material science, finance, and machine learning.

Research Collaboration: The quantum community is highly collaborative, with researchers sharing their findings and working together to refine algorithms. This collective effort accelerates the development of new algorithms.

Quantum Supremacy: Achieving quantum supremacy, where quantum computers outperform classical counterparts, is a driving force behind algorithm development. This milestone encourages the creation of algorithms to demonstrate quantum computing’s superiority.

Practical Applications: Quantum algorithms are continually evolving to address real-world problems. For example, quantum chemistry algorithms are advancing drug discovery, while optimization algorithms enhance logistics and supply chain management.

One of the most famous quantum algorithms is Shor’s algorithm, known for its ability to factor large numbers exponentially faster than classical algorithms. This poses a potential threat to current encryption methods, driving research into post-quantum cryptography.

Grover’s algorithm is another notable quantum algorithm. It’s designed to search unsorted databases faster than classical counterparts, which has implications for data retrieval and optimization.

Variational quantum algorithms, including the Quantum Approximate Optimization Algorithm (QAOA), are on the rise for solving optimization problems. These algorithms are crucial for applications like portfolio optimization, route planning, and machine learning.

Quantum computing is a field of constant innovation, where the development of new algorithms is at the forefront of progress. These algorithms harness the extraordinary capabilities of quantum computers, offering solutions to problems that have long perplexed classical machines. With quantum hardware evolving and a collaborative global research community, we can expect to see an ever-expanding array of quantum algorithms that open doors to exciting new possibilities in computing and science. As quantum technology continues to advance, it’s only a matter of time before these algorithms reshape industries and bring about transformative changes in our digital world.

Neuromorphic Computing

Neuromorphic computing draws inspiration from the architecture and functionality of the human brain. It’s all about mimicking the brain’s neural networks and cognitive processes to create computers that think and learn like humans. At its core, neuromorphic computing processes information in a fundamentally different way compared to classical computers. Instead of relying on sequential processing, it harnesses parallelism, adaptability, and self-learning capabilities.

Neuromorphic computing uses hardware based on the structures, processes and capacities of neurons and synapses in biological brains. The most common form of neuromorphic hardware is the spiking neural network (SNN). In this hardware, nodes — or spiking neurons — process and hold data like biological neurons.

Both neuromorphic and quantum computing rely on parallelism to process information. Neuromorphic systems, inspired by the brain’s neural networks, process vast amounts of data simultaneously, mimicking the brain’s ability to manage parallel tasks effortlessly. Quantum computers utilise qubits to explore multiple states in parallel, which is at the heart of their extraordinary computational power.

Neuromorphic and quantum computing, though distinct in their inspirations and goals, share fundamental traits that hint at a future of convergence. The development of neuromorphic quantum computing promises to be a game-changer in the world of artificial intelligence, optimization, and scientific research, opening up avenues that were once unimaginable. As researchers continue to explore the synergies between these fields, the journey toward a more powerful and capable future of computing is well underway. The possibilities are boundless, and the potential to bridge the worlds of minds and matter holds the key to unlocking the next era of computing.

Dynex Neuromorphic Platform

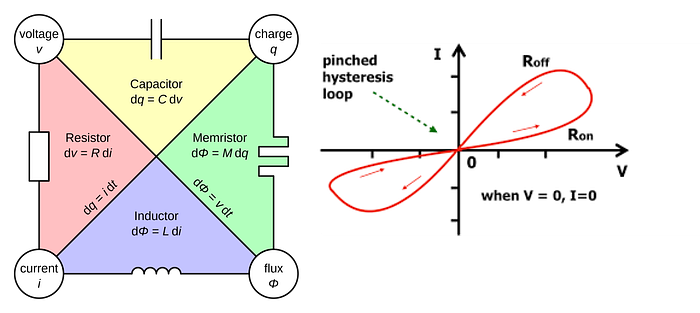

Using a decentralised network of (currently) more than 600,000 contributing GPUs, the Dynex Neuromorphic Platform provides supercomputing capabilities based on a proprietary neuromorphic circuit design built on memristors (short for “memory resistors”), which were first theorised in the 1970s by Leon Chua. The synergy of memristors and neuromorphic computing is setting the stage for a revolution in artificial intelligence, data processing, and energy-efficient computing. As memristor technology advances and researchers refine its applications in neuromorphic systems, we’re moving ever closer to achieving the dream of computers that can learn, adapt, and think in ways that mimic the incredible complexity of the human brain. With this convergence, we’re not just building more powerful computers; we’re creating systems that have the potential to revolutionise a multitude of industries and enhance our understanding of how intelligence and learning work.

A memristor (/ˈmɛmrɪstər/; a portmanteau of memory resistor) is a non-linear two-terminal electrical component relating electric charge and magnetic flux linkage. It was described and named in 1971 by Leon Chua, completing a theoretical quartet of fundamental electrical components which also comprises the resistor, capacitor and inductor.

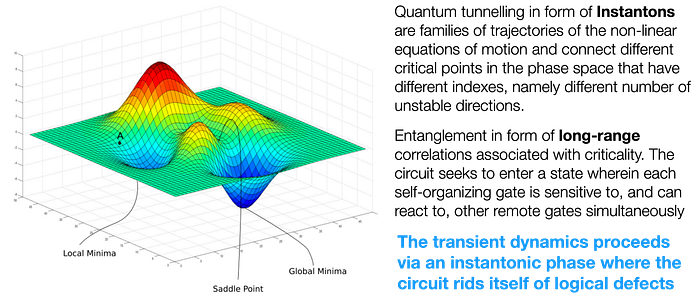

Dynex’ neuromorphic system can be realised physically as a non-linear dynamical system whose point attractors represent solutions to the problem being solved. Because it is a non-quantum system, its equations of motion can also be integrated numerically, and Graphics Processing Units (GPUs) are well suited to this integration task.
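As a toy illustration of what numerically integrating a system with point attractors means, the sketch below applies forward-Euler steps to a one-dimensional gradient flow with two stable equilibria. The potential, the step size and the function name are assumptions chosen purely for illustration; they are not the Dynex circuit equations.

```python
def integrate_to_attractor(x0: float, dt: float = 0.01, steps: int = 5000) -> float:
    """Forward-Euler integration of dx/dt = -dV/dx for V(x) = (x**2 - 1)**2.

    The system has two point attractors, x = -1 and x = +1, separated by an
    unstable equilibrium at x = 0.
    """
    x = x0
    for _ in range(steps):
        dxdt = -4.0 * x * (x * x - 1.0)  # negative gradient of the double-well potential
        x += dt * dxdt                   # one explicit Euler step
    return x


# Different starting points relax to the attractor of their basin.
for start in (-0.3, 0.4, 2.0):
    print(start, "->", round(integrate_to_attractor(start), 6))
```

Each trajectory settles into whichever attractor its starting point belongs to, which is the sense in which an attractor can encode the answer of a computation.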

The circuit design used on the Dynex platform supports superposition of input and output signals, allowing the circuit to operate bidirectionally. This allows the system to perform highly effective computations that are usually only possible through the superposition capability of qubits.

Subsequently, by employing topological field theory, it was shown that the physical reason behind this efficiency rests on the dynamical long-range order that develops during the transient dynamics where avalanches (instantons in the field theory language) of different sizes are generated until the system reaches an attractor. The transient phase of the solution search therefore resembles that of several phenomena in Nature, such as earthquakes, solar flares or quenches.

The Dynex Neuromorphic Chip is simulated on GPUs, achieving close to the real-time performance of a physical realisation of the chip. It is used to solve hard optimization problems, to implement integer linear programming (ILP), to carry out machine learning (ML), to train deep neural networks, or to improve computing efficiency generally.

Dynex’ Q-Score

To benchmark the efficacy and performance of the Dynex Neuromorphic platform, we measure its Q-score. The main advantage of the Q-score is that it predicts the efficacy of quantum devices in a real-world application. Furthermore, the Q-score can be computed for any computational device and hence allows a direct comparison between quantum and classical computers.

Atos formalised the Q-score in a 2021 paper [1], setting out to define a metric that is simultaneously application-centric, hardware-agnostic and scalable. The Q-score is a single number, where a higher value implies higher performance, which also makes it accessible to the public.

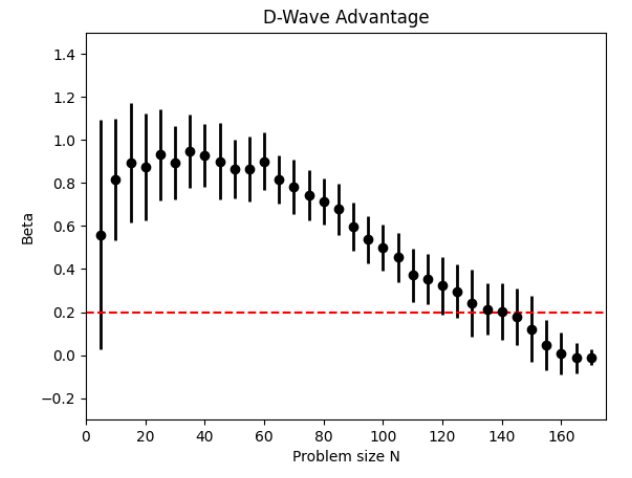

Atos proposes to approximately solve the Max-Cut problem for large graphs as a representative application for quantum devices [1]. They define the Q-score of a computational approach as the size of the largest graph for which the setup can approximately solve the Max-Cut problem with a solution that significantly outperforms a random guessing algorithm. For general purpose quantum computers, they propose to use the Quantum Approximate Optimisation Algorithm (QAOA) [2], as they view it as representative of practical needs of industry and challenging for current hardware.
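To make the Max-Cut objective concrete, the following minimal sketch brute-forces a tiny graph and checks that the QUBO encoding used later in `run_job` (a linear weight of −strength on both endpoints of each edge and a quadratic weight of 2·strength on the pair) has an energy equal to −strength times the cut size. The example graph and the helper names are illustrative assumptions, and exhaustive search is only feasible for very small graphs.

```python
from itertools import product


def cut_size(edges, x):
    """Number of edges whose endpoints lie on different sides of the partition x."""
    return sum(1 for i, j in edges if x[i] != x[j])


def qubo_energy(edges, x, strength=10):
    """Energy of the Max-Cut QUBO: sum over edges of -s*x_i - s*x_j + 2*s*x_i*x_j."""
    return sum(-strength * x[i] - strength * x[j] + 2 * strength * x[i] * x[j]
               for i, j in edges)


# Illustrative 5-node graph: a 4-cycle with one chord and one pendant node.
edges = [(0, 1), (1, 2), (2, 3), (3, 0), (0, 2), (3, 4)]
n = 5

# Exhaustive search over all 2**n partitions (x[i] is the side of node i).
best = max(product((0, 1), repeat=n), key=lambda x: cut_size(edges, x))

# The encoding is exact: energy == -strength * cut for every assignment.
assert all(qubo_energy(edges, x) == -10 * cut_size(edges, x)
           for x in product((0, 1), repeat=n))

print("best partition:", best, "cut size:", cut_size(edges, best))
```

Because each cut edge contributes exactly −strength to the energy, minimising the QUBO energy is the same as maximising the cut, and the cut size can be recovered as −energy / strength.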

By searching for a maximum cut on multiple graphs of size N on a given device, we can compute the average best cut C(N) that the device obtains for graphs of that size. We define the Q-score to be the highest N for which a setup can find a solution that is on average significantly better than a random cut. Specifically, it is the largest N for which the ratio β(N) exceeds the threshold β* = 0.2, where β(N) relates the improvement of C(N) over a random cut to the improvement an optimal cut would achieve [1].
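Reference [1] expresses β(N) through the expected size of a random cut, N²/8 for Erdos-Renyi graphs with edge probability 0.5, and the scaling constant 0.178 that also appears in the `run_job` docstring further down. A minimal sketch of that ratio, with function and constant names that are assumptions made here, could look like this:

```python
LAMBDA = 0.178         # scaling constant from [1]
BETA_THRESHOLD = 0.2   # threshold beta* used for the Q-score


def beta(avg_best_cut: float, n: int) -> float:
    """Gain over a random cut, relative to the asymptotic gain of an optimal cut."""
    random_cut = n ** 2 / 8            # expected cut of a random partition for p = 0.5
    optimal_gain = LAMBDA * n ** 1.5   # asymptotic gap between optimal and random cut
    return (avg_best_cut - random_cut) / optimal_gain


def passes_threshold(avg_best_cut: float, n: int) -> bool:
    """True if the average best cut counts as significantly better than random."""
    return beta(avg_best_cut, n) > BETA_THRESHOLD


# For N = 15,000 the denominator is the "Random best score" of the Atos package.
print(round(LAMBDA * 15000 ** 1.5, 2))
```

With this definition, β = 0 corresponds to random guessing. For N = 15,000 the denominator evaluates to about 327,006.88, which matches the "Random best score" in the log output shown later in this article.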

The same measurement can be carried out efficiently on the Dynex Neuromorphic platform, which allows us to benchmark it directly. To measure Dynex’ Q-score, we use the original package for computing the Atos Q-score. We start by importing the Dynex SDK package and other requirements:

    import dynex
    import dimod
    from pyqubo import Array
    import networkx as nx
    from collections import defaultdict
    import numpy as np
    from datetime import datetime
    import pickle
    from dimod import BinaryQuadraticModel, BINARY
    from tqdm.notebook import tqdm

The only required adaptation to the reference package is to integrate the Dynex SDK sampling function, which we specify as follows:

    def run_job(size, depth=1, seed=None, plot=False,
                strength=10, generate_random=False,
                num_reads=500000, annealing_time=1000,
                debug=False):
        """
        (Re)implementation of Atos' generate_maxcut_job() function as specified in job_generation.py.
        It generates a random Erdos-Renyi graph of a given size, converts the graph to a QUBO
        formulation, samples it on the Dynex platform and returns the number of cuts
        (= negated ground-state energy / strength) to be consistent with the paper.

        Parameters:
        -----------
        - size (int): size of the maximum cut problem graph
        - depth (int): depth of the problem (not used in this function)
        - seed (int): random seed for the graph generation
        - plot (boolean): plot graphs
        - strength (int): weight of qubo formulations' edges
        - generate_random (boolean): implementation of a random assignment, can replace 0.178 * pow(size, 3 / 2) from paper
        - num_reads (int): number of reads passed to the Dynex sampler
        - annealing_time (int): annealing time passed to the Dynex sampler
        - debug (boolean): print progress messages

        Returns:
        --------
        - maximum_cut result (float)
        - random_cut result (float), or -1 if generate_random is False
        """
        # Create an Erdos-Renyi graph of a given size:
        G = nx.generators.erdos_renyi_graph(size, 0.5, seed=seed)
        if debug:
            print('Graph generated. Now constructing Binary Quadratic Model...')
        if plot:
            nx.draw(G)

        # We directly build a binary quadratic model (faster).
        # Every cut edge contributes exactly -strength to the energy:
        _bqm = BinaryQuadraticModel.empty(vartype=BINARY)
        for i, j in tqdm(G.edges):
            _bqm.add_linear(i, -1 * strength)
            _bqm.add_linear(j, -1 * strength)
            _bqm.add_quadratic(i, j, 2 * strength)
        if debug:
            print('BQM generated. Starting sampling...')

        # Sample on Dynex Platform:
        model = dynex.BQM(_bqm, logging=False)
        sampler = dynex.DynexSampler(model, mainnet=True, description='Dynex SDK test', logging=False)
        sampleset = sampler.sample(num_reads=num_reads,
                                   annealing_time=annealing_time, debugging=False)
        # Convert the lowest energy found back into a number of cut edges:
        cut = (sampleset.first.energy * -1) / strength

        # Random cut?
        r_cut = -1
        if generate_random:
            random_assignment = list(np.random.randint(0, 2, size))
            r_assignment = dimod.SampleSet.from_samples_bqm(random_assignment, _bqm)
            r_cut = (r_assignment.first.energy * -1) / strength

        if plot:
            # draw_sampleset is a plotting helper that is not shown in this article
            draw_sampleset(G, sampleset)

        return cut, r_cut

The Erdos-Renyi graph is converted to a Binary Quadratic Model and then sampled on the Dynex platform using the Ising/QUBO sampler functionality. In [3], the authors measured Q-scores for different samplers and systems, including the quantum computers D-Wave 2000Q and D-Wave Advantage. They also concluded that the Q-scores of gate-based quantum computers (IBM, Google, and others) do not exceed the D-Wave Advantage Q-score. The metric is determined as the highest problem size N for which Beta still exceeds 0.2. The following graph shows the evolution of Beta versus increasing N for the D-Wave Advantage quantum computer [3]:

At N > 140, the achieved Beta falls below the threshold of 0.2, indicating that the D-Wave Advantage system (5,760 physical qubits), the largest quantum computer in existence at the time of writing, has a Q-score of 140.
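The thresholding rule can be sketched as a small helper that takes the measured Beta per problem size and returns the Q-score. The helper name and the numbers in the example are made up for illustration and are not measurements from [3]; the sketch also assumes, as a simplification, that the Q-score is the largest N reached before Beta first drops to or below the threshold.

```python
def q_score(beta_by_n: dict, threshold: float = 0.2) -> int:
    """Largest N such that beta exceeds the threshold for all sizes up to and including N."""
    score = 0
    for n in sorted(beta_by_n):
        if beta_by_n[n] <= threshold:
            break          # first size at which the device no longer beats the threshold
        score = n
    return score


# Hypothetical sweep, NOT real measurements.
example = {10: 0.61, 20: 0.44, 30: 0.27, 40: 0.15, 50: 0.08}
print(q_score(example))
```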

Results

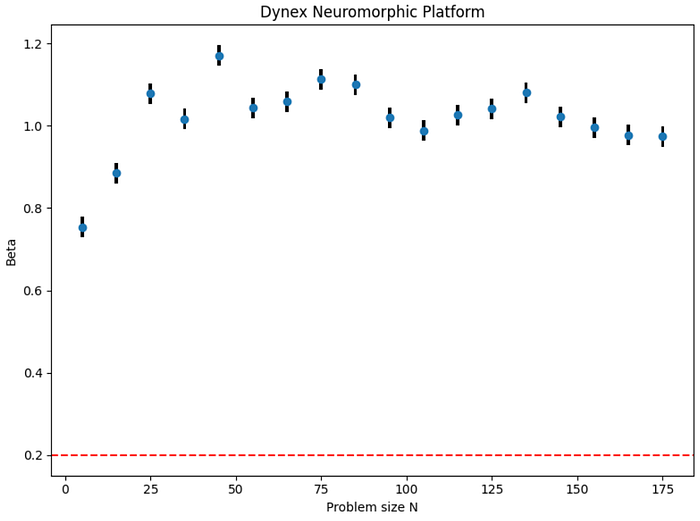

To benchmark Dynex against the quantum computers measured in [3], we computed the Q-score for Dynex by measuring Beta for N values from 5 to 175 in increments of 10, using 500,000 Dynex chips with a maximum of 300 integration steps. The authors of [3] allowed a limit of 60 seconds per computation; in our case, each calculation required only a few seconds with the set number of integration steps. Note that a higher Beta means a better result.

The Dynex Platform consistently computes Beta ≈ 1.0, which shows that Dynex’ Q-score lies in a much higher range and supports the rationale that neuromorphic computing provides quantum-like computing performance without its limitations.

We then extended our analysis to larger problem sizes N. Across these runs, the Dynex platform consistently produced a Beta value exceeding the critical threshold of 0.2.

    Running for n=15000, N = 15000
    Score: 16358780.88. Random best score: 327006.88. Success. beta = 50.02580031343683

Our observations suggest that the primary limiting factor is the Python environment used. Specifically, for a randomly generated Erdos-Renyi graph of size 15,000, the resulting binary quadratic problem contains more than 56 million quadratic terms, one per edge of the graph. Larger problem instances run into a 2 GB size limit. In summary, our findings support the assertion that the Dynex Neuromorphic Platform attains a Q-score surpassing 15,000.
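The figure of more than 56 million quoted above matches the expected number of edges of an Erdos-Renyi graph with 15,000 nodes and edge probability 0.5, since `run_job` adds one quadratic term per edge. A short check, with a helper name that is an assumption made here:

```python
from math import comb


def expected_edges(n: int, p: float = 0.5) -> float:
    """Expected number of edges of an Erdos-Renyi graph G(n, p): p * n * (n - 1) / 2."""
    return p * comb(n, 2)


print(expected_edges(15_000))  # 56246250.0
```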

We hope you enjoyed reading this article. Source code for the Q-Score calculation is also available on our GitHub repository. If you want to learn more, visit the Dynex SDK Wiki or browse the Dynex SDK Documentation. You can also get in touch with us on one of our channels.

Further Reading:

- https://dynexcoin.org

- https://github.com/dynexcoin/DynexSDK/wiki/Welcome-to-the-Dynex-Platform

- https://github.com/dynexcoin/DynexSDK/wiki/Workflow:-Formulation-and-Sampling

- https://github.com/dynexcoin/DynexSDK/wiki/What-is-Neuromorphic-Computing%3F

- https://github.com/dynexcoin/DynexSDK/wiki/Solving-Problems-with-the-Dynex-SDK

- https://github.com/dynexcoin/DynexSDK/wiki/Appendix:-Next-Learning-Steps

References

[1] S. Martiel, T. Ayral, and C. Allouche, “Benchmarking quantum coprocessors in an application-centric, hardware-agnostic, and scalable way,” IEEE Transactions on Quantum Engineering, vol. 2, pp. 1–11, 2021. [Online]. Available: http://dx.doi.org/10.1109/TQE.2021.3090207

[2] E. Farhi, J. Goldstone, and S. Gutmann, “A quantum approximate optimization algorithm,” arXiv preprint arXiv:1411.4028, 2014.

[3] W. van der Schoot, D. Leermakers, R. Wezeman, N. Neumann, and F. Phillipson, “Evaluating the Q-score of quantum annealers,” in 2022 IEEE International Conference on Quantum Software (QSW), Jul. 2022, pp. 9–16.
